
As in any other engineering discipline, software engineering also has some structured models for software development. This document will provide you with a generic overview of the different software development methodologies adopted by contemporary software firms. Read on to know more about the Software Development Life Cycle (SDLC) in detail.

Curtain Raiser

Like any other set of engineering products, software products are also oriented towards the customer. A product is either market driven or it drives the market. Customer Satisfaction and Customer Delight were buzzwords many decades ago. Customer Co-creation is the new buzzword that has been doing the rounds. Products that are not customer or user friendly have no place in the market, even though they are engineered using the best technology. The experience of the product and the participation of the customer in creating the product are as crucial as the internal technology of the product.

Market Research

A market study is made to identify a potential customer's need. This process is also known as market research. Here, the already existing needs and the possible and potential needs that are available in a segment of the society are studied carefully. The market study is done based on a lot of assumptions. Assumptions are the crucial factors in the development or inception of a product. Unrealistic assumptions can cause a nosedive in the entire venture. Though assumptions are abstract, there should be a move to develop tangible assumptions to come up with a successful product.

Research and Development

Once the market research is carried out, the customer's need is given to the Research & Development division (R & D) to conceptualize a cost-effective system that could potentially solve the customer's needs in a manner that is better than the one adopted by the competitors at present. Once the conceptual system is developed and tested in a hypothetical environment, the development team takes control of it. The development team adopts one of the software development methodologies given below, develops the proposed system, and gives it to the customer.

The Sales & Marketing division starts selling the software to the available customers and simultaneously works to develop a niche segment that could potentially buy the software. In addition, the division also passes the feedback from the customers to the developers and the R & D division to make possible value additions to the product.

While developing software, the company outsources the non-core activities to other companies who specialize in those activities. This accelerates the software development process considerably. Some companies work on tie-ups to bring out a highly matured product in a short period.

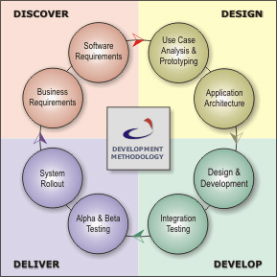

Popular Software Development Models

The following are some basic popular models that are adopted by many software development firms:

A. System Development Life Cycle (SDLC) Model

B. Prototyping Model

C. Rapid Application Development Model

D. Component Assembly Model

A. System Development Life Cycle (SDLC) Model

This is also known as the Classic Life Cycle Model, the Linear Sequential Model, or the Waterfall Method. This model has the following activities.

1. System / Information Engineering and Modeling

As software is always part of a larger system (or business), work begins by establishing requirements for all system elements and then allocating some subset of these requirements to software. This system view is essential when the software must interface with other elements such as hardware, people and other resources. The system is the basic and very critical requirement for the existence of software in any entity. So if the system is not in place, the system should be engineered and put in place. In some cases, to extract the maximum output, the system should be re-engineered and spruced up. Once the ideal system is engineered or tuned, the development team studies the software requirement for the system.

2. Software Requirement Analysis

This process is also known as the feasibility study. In this phase, the development team visits the customer and studies their system. They investigate the need for possible software automation in the given system. By the end of the feasibility study, the team furnishes a document that holds the different specific recommendations for the candidate system. It also includes the personnel assignments, costs, project schedule, target dates, etc. The requirement gathering process is intensified and focused specially on software. To understand the nature of the program(s) to be built, the system engineer or "analyst" must understand the information domain for the software, as well as the required function, behavior, performance and interfacing. The essential purpose of this phase is to find the need and to define the problem that needs to be solved.

3. System Analysis and Design

In this phase of the software development process, the software's overall structure and its nuances are defined. In terms of client/server technology, the number of tiers needed for the package architecture, the database design, the data structure design, etc. are all defined in this phase. A software development model is thus created. Analysis and design are very crucial in the whole development cycle. Any glitch in the design phase could be very expensive to solve in the later stages of the software development. Much care is taken during this phase. The logical system of the product is developed in this phase.

4. Code Generation

The design must be translated into a machine-readable form. The code generation step performs this task. If the design is performed in a detailed manner, code generation can be accomplished without much complication. Programming tools like compilers, interpreters, debuggers, etc. are used to generate the code. Different high-level programming languages like C, C++, Pascal, and Java are used for coding. The right programming language is chosen with respect to the type of application.

5. Testing

Once the code is generated, testing of the software program begins. Different testing methodologies are available to unravel the bugs that were committed during the previous phases. Different testing tools and methodologies are already available. Some companies build their own testing tools that are tailor-made for their own development operations.

6. Maintenance

The software will definitely undergo change once it is delivered to the customer. There can be many reasons for this change to occur. Change could happen because of some unexpected input values into the system. In addition, changes in the system could directly affect the software operations. The software should be developed to accommodate changes that could happen during the post-implementation period.
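
The strictly sequential nature of the six activities above can be sketched in a few lines of Python. This is only an illustration: the names `Phase`, `next_phase` and `run_waterfall` are invented here and do not come from any standard or tool.

```python
from enum import IntEnum
from typing import List, Optional


class Phase(IntEnum):
    """The six activities listed above, in their fixed order."""
    SYSTEM_ENGINEERING = 1
    REQUIREMENT_ANALYSIS = 2
    ANALYSIS_AND_DESIGN = 3
    CODE_GENERATION = 4
    TESTING = 5
    MAINTENANCE = 6


def next_phase(current: Phase) -> Optional[Phase]:
    """Return the phase that follows `current`, or None after the last one."""
    if current is Phase.MAINTENANCE:
        return None
    return Phase(current + 1)


def run_waterfall() -> List[str]:
    """Walk the phases strictly in sequence; there is no step backwards."""
    visited = []
    phase: Optional[Phase] = Phase.SYSTEM_ENGINEERING
    while phase is not None:
        visited.append(phase.name)
        phase = next_phase(phase)
    return visited
```

Because `next_phase` only ever moves forward, the sketch also makes the model's main limitation visible: a problem found in testing has no built-in route back to design.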

B. Prototyping Model

This is a cyclic version of the linear model. In this model, once the requirement analysis is done and the design for a prototype is made, the development process gets started. Once the prototype is created, it is given to the customer for evaluation. The customer tests the package and gives his/her feedback to the developer, who refines the product according to the customer's exact expectation. After a finite number of iterations, the final software package is given to the customer. In this methodology, the software is evolved as a result of periodic shuttling of information between the customer and developer. This is the most popular development model in the contemporary IT industry. Most of the successful software products have been developed using this model, as it is very difficult (even for a whiz kid!) to comprehend all the requirements of a customer in one shot. There are many variations of this model skewed with respect to the project management styles of the companies. New versions of a software product evolve as a result of prototyping.
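
The customer/developer shuttle described above is essentially a loop that ends when the customer has nothing more to ask for. A minimal sketch follows, assuming the customer can be modelled as a callback; `prototype_until_accepted`, `get_feedback` and `max_iterations` are hypothetical names chosen for this example.

```python
from typing import Callable, List


def prototype_until_accepted(
    requirements: List[str],
    get_feedback: Callable[[List[str]], List[str]],
    max_iterations: int = 10,
) -> List[str]:
    """Refine a prototype until the customer has no further requests."""
    prototype = list(requirements)  # first prototype: what we understood so far
    for _ in range(max_iterations):  # "a finite number of iterations"
        requested = get_feedback(prototype)  # customer evaluates the prototype
        if not requested:
            break  # nothing left to refine: this is the final package
        new_features = [r for r in requested if r not in prototype]
        prototype.extend(new_features)  # developer refines the product
    return prototype
```

For example, a customer who really wants `["login", "reports", "export"]` but reveals one missing feature per review would take two refinement rounds to reach the final package from a first prototype containing only `"login"`.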

C. Rapid Application Development (RAD) Model

The RAD model is a linear sequential software development process that emphasizes an extremely short development cycle. The RAD model is a "high speed" adaptation of the linear sequential model in which rapid development is achieved by using a component-based construction approach. Used primarily for information systems applications, the RAD approach encompasses the following phases:

1. Business modeling

The information flow among business functions is modeled in a way that answers the following questions:

What information drives the business process?

What information is generated?

Who generates it?

Where does the information go?

Who processes it?

2. Data modeling

The information flow defined as part of the business modeling phase is refined into a set of data objects that are needed to support the business. The characteristics (called attributes) of each object are identified and the relationships between these objects are defined.

3. Process modeling

The data objects defined in the data modeling phase are transformed to achieve the information flow necessary to implement a business function. Processing descriptions are created for adding, modifying, deleting, or retrieving a data object.
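
To make the data modeling and process modeling steps concrete, the sketch below defines two data objects with attributes, one relationship between them, and the four processing operations named above. The `Customer`, `Order` and `OrderStore` names and the order-entry scenario are assumptions made purely for illustration.

```python
from dataclasses import dataclass, field
from typing import Dict, List, Optional


@dataclass
class Customer:
    """A data object; its fields are the attributes."""
    customer_id: int
    name: str


@dataclass
class Order:
    order_id: int
    customer_id: int  # relationship: each order belongs to one customer
    items: List[str] = field(default_factory=list)


class OrderStore:
    """Processing for one data object: add, modify, delete, retrieve."""

    def __init__(self) -> None:
        self._orders: Dict[int, Order] = {}

    def add(self, order: Order) -> None:
        if order.order_id in self._orders:
            raise ValueError(f"order {order.order_id} already exists")
        self._orders[order.order_id] = order

    def modify(self, order_id: int, items: List[str]) -> None:
        self._orders[order_id].items = list(items)

    def delete(self, order_id: int) -> None:
        del self._orders[order_id]

    def retrieve(self, order_id: int) -> Optional[Order]:
        return self._orders.get(order_id)
```

In a real RAD tool these definitions would typically be generated from a visual model rather than written by hand; the sketch only shows what the two modeling steps have to decide.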

4. Application generation

The RAD model assumes the use of RAD tools like VB, VC++, Delphi, etc. rather than creating software using conventional third generation programming languages. The RAD model works to reuse existing program components (when possible) or create reusable components (when necessary). In all cases, automated tools are used to facilitate the construction of the software.

5. Testing and turnover

Since the RAD process emphasizes reuse, many of the program components have already been tested. This minimizes the testing and development time.

D. Component Assembly Model

Object technologies provide the technical framework for a component-based process model for software engineering. The object oriented paradigm emphasizes the creation of classes that encapsulate both data and the algorithms that are used to manipulate the data. If properly designed and implemented, object oriented classes are reusable across different applications and computer based system architectures. The Component Assembly Model leads to software reusability. The integration/assembly of the already existing software components accelerates the development process. Nowadays many component libraries are available on the Internet. If the right components are chosen, the integration aspect is made much simpler.
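
As a small illustration of a class that encapsulates both its data and the algorithm that manipulates it, and is then reused by two unrelated applications, consider the sketch below. The `Tally` component and the two functions that assemble it are hypothetical examples, not part of any component library.

```python
from typing import Iterable


class Tally:
    """Reusable component: hides its data and the algorithm that updates it."""

    def __init__(self) -> None:
        self._count = 0
        self._total = 0.0

    def add(self, value: float) -> None:
        self._count += 1
        self._total += value

    def mean(self) -> float:
        if self._count == 0:
            raise ValueError("no values recorded")
        return self._total / self._count


def average_order_value(amounts: Iterable[float]) -> float:
    """A sales application assembled from the component."""
    tally = Tally()
    for amount in amounts:
        tally.add(amount)
    return tally.mean()


def average_response_time(milliseconds: Iterable[float]) -> float:
    """A monitoring application reusing the same component unchanged."""
    tally = Tally()
    for ms in milliseconds:
        tally.add(ms)
    return tally.mean()
```

Neither application touches `_count` or `_total` directly, which is what allows the component to be tested once and reused.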

Conclusion

All these different software development models have their own advantages and disadvantages. Nevertheless, in the contemporary commercial software development world, a fusion of all these methodologies is incorporated. Timing is very critical in software development. If a delay happens in the development phase, the market could be taken over by the competitor. Also, if a bug-filled product is launched in a short period of time (quicker than the competitors), it may affect the reputation of the company. So, there should be a tradeoff between the development time and the quality of the product. Customers do not expect a bug-free product, but they expect a user-friendly product that they can give a thumbs-up to.

Software Development Life Cycle Models?

Methodologies:

Agile: Agile methods generally promote a disciplined project management process that encourages frequent inspection and adaptation, a leadership philosophy that encourages teamwork, self-organization and accountability, a set of engineering best practices intended to allow for rapid delivery of high-quality software, and a business approach that aligns development with customer needs and company goals.

RAD Model: It involves minimal planning in favor of rapid prototyping. The "planning" of software developed using RAD is interleaved with writing the software itself. The lack of extensive pre-planning generally allows software to be written much faster, and makes it easier to change requirements.

Spiral Model: The spiral methodology extends the waterfall model by introducing prototyping. It is generally chosen over the waterfall approach for large, expensive, and complicated projects. At a high level, the steps in the spiral model are as follows:

The new system requirements are defined in as much detail as possible. This usually involves interviewing a number of users representing all the external or internal users and other aspects of the existing system.

A preliminary design is created for the new system.

A first prototype of the new system is constructed from the preliminary design. This is usually a scaled-down system, and represents an approximation of the characteristics of the final product.

Waterfall Method: The name of the waterfall lifecycle model comes from its physical appearance, shown in the figure, and the process by which the results from one phase flow into the next. An alternative name for this model is the "Big Bang" lifecycle, because working results first appear near the end of the process, something like how scientists describe the Big Bang theory of how the universe was created. The waterfall model is simple, easy to learn, and easy to use.

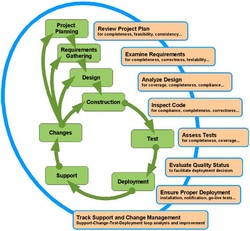

V Model: All the necessary steps of the Software Development Life Cycle (SDLC) are followed. Most importantly, the stage of Software Quality Assurance (SQA) is executed with utmost care. The life cycle used by us for application development is as follows.

SDLC Phases?

The systems development life cycle (SDLC) is a conceptual model used in project management that describes the stages involved in an information system development project, from an initial feasibility study through maintenance of the completed application.

SDLC Phases:

Planning

Everything starts with a concept. It could be a concept of someone, or everyone. However, there are those that do not start out with a concept but with a question, "What do you want?" They ask thousands of people in a community or some age group to know what they want and decide to create an answer. But it all goes back to planning and conceptualization.

Requirement

In engineering, a requirement is a singular documented need of what a particular product or service should be or perform. It is most commonly used in a formal sense in systems engineering or software engineering. It is a statement that identifies a necessary attribute, capability, characteristic, or quality of a system in order for it to have value and utility to a user.

Design

Once the planning is done, along with arguing with the manager or owner about the plan and somehow convincing them, it is time to design or create a rough plan regarding the software.

Implementation

The first two stages are quite common in all SDLC models. However, things change starting at this stage. When the design and all the things that you need have been laid out, it is time to work on the plan. Some developers, especially those that follow the standard plan of developing software, will work on the plan and present it for approval.

Testing

This could mean two things depending on the SDLC model. The first kind of testing is testing by actual users. This is usually done in models wherein implementation does not go with pre-testing with users. On the other hand, there is also testing that uses professionals in the field. This testing is aimed at cleaning the software of all bugs altogether. For software that is set for public release, the software is first tested by other developers who were not in charge of creating the software.

Acceptance

When the software is released to be used by a certain company, acceptance means the software is implemented as an added tool or as a replacement for another software that has been found wanting after years of use. On the other hand, when the software is implemented for the public, the new software could be an added software for use. It is difficult to change public habits, but the public is not closing its ears to new software. So developers will always have a fighting chance in the market as long as they implement good software for public use.

Maintenance

When the software is implemented, it does not mean that the software is good as it is. All SDLC models include maintenance, since there is absolutely no way that a software will be working perfectly. Someone has to stay with the software to take a look and ensure the program works perfectly. When the software is implemented for the public, software companies set up either a call center or an e-mail service to address the concerns of the consumer. As we have indicated in previous chapters, maintenance is quite an easy task as long as the right product is used as intended and in the expected time frame. However, it is always a challenge when something goes wrong. The whole team might not be there to help the developer, so a major concern might never be addressed.

Advantages and Disadvantages of SDLC Models?

SDLC models Advantages & disadvantages

Advantages of Waterfall Model

1. Clear project objective.

2. Stable project requirements.

3. Progress of system is measurable.

4. Strict sign-off requirements.

Disadvantages of Waterfall Model

1. Time consuming

2. Never backward (Traditional)

3. Little room for iteration

4. Difficulty responding to changes

Advantages of Spiral Model

1. Avoidance of Risk is enhanced.

2. Strong approval and documentation control.

3. Implementation has priority over functionality.

4. Additional Functionality can be added at a later date.

Disadvantages of Spiral Model

1. Highly customized, limiting re-usability

2. Applied differently for each application

3. Risk of not meeting budget or schedule

4. Possibility to end up implemented as the Waterfall framework

Advantages of Prototype Model

1. Strong Dialogue between users and developers

2. Missing functionality can be identified easily

3. Confusing or difficult functions can be identified

4. Requirements validation, quick implementation of an incomplete, but functional, application

5. May generate specifications for a production application

6. Environment to resolve unclear objectives

7. Encourages innovation and flexible designs

Disadvantages of Prototype Model

1. Contract may be awarded without rigorous evaluation of the prototype

2. Identifying non-functional elements is difficult to document

3. Incomplete application may cause the application not to be used as the full system was designed

4. Incomplete or inadequate problem analysis

5. Client may be unknowledgeable

6. Approval process and requirements are not strict

7. Requirements may frequently change significantly

Software requirement specification (SRS)?

A Software Requirements Specification (SRS) is a complete description of the behavior of the system to be developed. It includes a set of use cases that describe all the interactions that the users will have with the software. Use cases are also known as functional requirements. In addition to use cases, the SRS also contains non-functional (or supplementary) requirements. Non-functional requirements are requirements which impose constraints on the design or implementation (such as performance engineering requirements, quality standards, or design constraints).

1. What Makes a Great Software Requirements Specification?

We have to keep in mind that the goal is not to create great specifications but to create great products and great software. Can you create a great product without a great specification? Absolutely! You can also make your first million through the lottery, but why take your chances? Systems and software these days are so complex that to embark on the design before knowing what you are going to build is foolish and risky.

2. What are the benefits of a Great SRS?

· Establish the basis for agreement between the customers and the suppliers on what the software product is to do.

· Reduce the development effort.

· Provide a basis for estimating costs and schedules.

· Provide a baseline for validation and verification.

· Facilitate transfer.

· Serve as a basis for enhancement.

3. What are the characteristics of a great SRS?

· Correct

· Unambiguous

· Complete

· Consistent

· Ranked for importance and / or stability

· Verifiable

· Modifiable

· Traceable
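
Some of these characteristics can be checked mechanically once each requirement is stored as a structured record. The sketch below covers only three of them (ranked, verifiable, traceable) plus unique identifiers; the `Requirement` fields and the `find_problems` helper are assumptions for illustration and are not taken from any SRS standard or tool.

```python
from dataclasses import dataclass
from typing import Iterable, List


@dataclass
class Requirement:
    req_id: str
    text: str
    rank: int          # ranked for importance (1 = highest)
    verification: str  # how it will be verified; empty = not yet verifiable
    source: str        # traceability: where the requirement came from


def find_problems(requirements: Iterable[Requirement]) -> List[str]:
    """Report requirements that miss a mechanically checkable characteristic."""
    problems = []
    seen = set()
    for req in requirements:
        if req.req_id in seen:
            problems.append(f"{req.req_id}: duplicate identifier")
        seen.add(req.req_id)
        if req.rank < 1:
            problems.append(f"{req.req_id}: not ranked")
        if not req.verification.strip():
            problems.append(f"{req.req_id}: not verifiable")
        if not req.source.strip():
            problems.append(f"{req.req_id}: not traceable")
    return problems
```

Characteristics such as "correct", "unambiguous" and "complete" still need human review; a check like this only catches missing bookkeeping.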

4. What is the difference between a software requirement and a design requirement?

The SRS should not include any design requirements. However, this is a difficult discipline. For example, because of the partitioning and the particular RTOS you are using, and the particular hardware you are using, you may require that no task use more than 1 ms of processing prior to releasing control back to the RTOS. Although that may be a true requirement, and it involves software and should be tested, it is truly a design requirement and should be included in the Software Design Document or in the source code.

Consider the target audience for each specification to identify what goes into what documents.

Marketing / Product Management

Creates a product specification and gives it to Systems. It should define everything Systems needs to specify the product.

Systems

Creates a System Specification and gives it to Systems / Software and Mechanical and Electrical Design.

Systems / Software

Creates a Software Specification and gives it to Software. It should define everything Software needs to develop the software.

Thus, the SRS should define everything that Software needs to develop the software, either explicitly or (preferably) by reference. References should include the version number of the target document. Also, consider using master document tools which allow you to include other documents and easily access the full requirements.

software development life cycle

Evolutionary Development Model - A software development life cycle model

The waterfall model is viable for software products that do not change very much once they are specified. But for software products that have their feature sets redefined during development because of user feedback and other factors, the traditional waterfall model is no longer appropriate.

- The Evolutionary development model (EVO) divides the development cycle into smaller, incremental waterfall models in which users are able to get access to the product at the end of each cycle.

- Feedback is provided by the users on the product for the planning stage of the next cycle and the development team responds, often by changing the product, plans, or processes.

- These incremental cycles are typically two to four weeks in duration and continue until the product is shipped.

Benefits of Evolutionary Development Model

- Benefits not only business results but marketing and internal operations as well.

- Use of EVO brings significant reduction in risk for software projects.

- EVO can reduce costs by providing a structured, disciplined avenue for experimentation.

- EVO allows the marketing department access to early deliveries, facilitating development of documentation and demonstrations.

- Short, frequent EVO cycles have some distinct advantages for internal processes and people considerations.

- The cooperation and flexibility that EVO requires of each developer results in greater teamwork.

- Better fit the product to user needs and market requirements.

- Manage project risk with definition of early cycle content.

- Uncover key issues early and focus attention appropriately.

- Increase the opportunity to hit market windows.

- Accelerate sales cycles with early customer exposure.

- Increase management visibility of project progress.

- Increase productivity and product team motivation.

The Journey

At the Define stage of the DFSS methodology, we instigated the requirement drill down process of HOQ-e. The key activities were:

At level 1 of HOQ-e: Stakeholder Analysis. We identified the principal roles and actors related to the transformation program, e.g. the business users, business sponsors, and other concerned parties. The HOQ-e ensured an exhaustive list of roles and responsibilities.

At level 2 of HOQ-e: We used the HOQ-e to analyse the core business functions of the stakeholders and assessed them against the importance of each stakeholder, identified in level 1.

At level 3 of HOQ-e: We used the HOQ-e to assess the inefficiency drivers of each core business function, identified in level 2.

At level 4 of HOQ-e: We used the HOQ-e to identify high level solution characteristics to resolve the inefficiency drivers, identified in level 3.

At level 5 of HOQ-e: We used the HOQ-e to start the process of deriving the user requirements from the solution characteristics, identified in level 4.

At level 6 of HOQ-e: We used the HOQ-e to kick off the refinement process of the user requirements, identified in level 5, into technical requirements.
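
The text does not describe how HOQ-e scores one level against the next, so the sketch below shows only the conventional House of Quality calculation as an assumption: each row item carries an importance weight, each cell holds a relationship strength (commonly 9, 3 or 1), and a column's priority is the weighted sum. The function name and the sample data are hypothetical.

```python
from typing import Dict


def column_priorities(
    importance: Dict[str, float],
    relationships: Dict[str, Dict[str, float]],
) -> Dict[str, float]:
    """Weighted-sum priority of each column item in one House of Quality level.

    importance:    row item -> importance weight
    relationships: row item -> {column item -> relationship strength}
    """
    scores: Dict[str, float] = {}
    for row, weight in importance.items():
        for column, strength in relationships.get(row, {}).items():
            scores[column] = scores.get(column, 0) + weight * strength
    return scores


# Hypothetical level: user requirements (rows) against technical requirements (columns).
priorities = column_priorities(
    importance={"fast checkout": 5, "clear pricing": 3},
    relationships={
        "fast checkout": {"page load time": 9, "number of form fields": 3},
        "clear pricing": {"number of form fields": 1},
    },
)
```

With these sample numbers, "page load time" scores 5 × 9 = 45 and "number of form fields" scores 5 × 3 + 3 × 1 = 18. In a cascade, the column scores of one level would become the row importances of the next.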

At the Measure stage of the DFSS methodology, we derived the metrics (referred to as Critical To Quality, or CTQs) required to measure conformance to the quality of the proposed solution or SLAs. The high level quality attributes are articulated by the business stakeholders, and we used the GQ(I)M to derive the measurable part of the quality attributes, which are called Critical To Quality (CTQs).

At level 7 of the HOQ-e: We employed the HOQ-e to assess the relationships between the technical requirements, identified in level 6 of HOQ-e, and the CTQs derived by the GQ(I)M. At this level we assess how well (defined by the CTQs) a function (defined by the technical requirement) should perform. We are thus able to map the technical requirements to a model with clear quality metrics.

At the Analysis - Design stage of the DFSS methodology, we enter the design phase, wherein the technical requirements (level 7 of the HOQ-e) are translated into Testable Integration Architecture (TiA-e) models. These are the communication models, or in UML terms the component models, of the proposed solutions. In the context of this program, the models were illustrated in BPMN 2.0 notation. The ZDLC automatically preserves the traceability of the artifacts from level 1 (of HOQ-e) down to the TiA-e models. The key activities were:

Using TiA-e: We built the simulation model based on the specification prescribed by level 7 of HOQ-e; TiA-e produced an accurate representation of the structural model of the proposed solution.

Using TiA-e: We used the formal pi-calculus compiler to verify the syntactical characteristics of the TiA-e model.

Using TiA-e: We calibrated and validated the TiA-e model against the requirements identified in level 7.

Using TiA-e: We simulated the model against the CTQs identified in level 7 of the HOQ-e and corrected the model as required, whilst asking the business a vital question: "Is that what you meant?"

Using TiA-e: Based on the corrected model, BPMN, UML state-charts and designs are generated and are provided as guidance to the development team for coding. The guidelines are unambiguous, hence reinforcing the communication to the developers.

At the Validate stage of the DFSS methodology, we employed the Systemic Defect Profiler to Reduce the time taken to find the root cause of a defect. The key activities are:

Using SDP-e: The debug parser of SDP-e has been configured to interpret the log structure of the implementation technology in place, which in our context was Pega PRPC. Note that SDP-e requires at least five attributes from the application logs, which are 1) time-stamp, 2) session ID, 3) source component, 4) destination component and 5) the function call.

Using SDP-e: We set up the server for SDP-e to be executed. SDP-e is injected with run-time application logs or debug logs, and that data is reverse engineered and automatically examined against the TiA-e model. SDP-e generated a defect report with an enriched description of the root cause of the defect, thus aiding the developer to speed up the defect fix activity.
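
To show what extracting the five required attributes from a log line might look like, here is a minimal parser sketch. The pipe-delimited layout, the `LogRecord` type and `parse_line` are all assumptions invented for this example; they are not the real Pega PRPC log format or the SDP-e parser.

```python
from dataclasses import dataclass
from datetime import datetime


@dataclass(frozen=True)
class LogRecord:
    """The five attributes named above."""
    timestamp: datetime
    session_id: str
    source: str
    destination: str
    function_call: str


def parse_line(line: str) -> LogRecord:
    """Parse one line of a hypothetical pipe-delimited log format:

    <ISO time-stamp>|<session ID>|<source>|<destination>|<function call>
    """
    parts = [part.strip() for part in line.split("|")]
    if len(parts) != 5:
        raise ValueError(f"expected 5 fields, got {len(parts)}")
    timestamp, session_id, source, destination, function_call = parts
    return LogRecord(
        timestamp=datetime.fromisoformat(timestamp),
        session_id=session_id,
        source=source,
        destination=destination,
        function_call=function_call,
    )


record = parse_line("2013-05-01T10:15:30|S42|OrderUI|PricingService|getQuote")
```

Grouping such records by session ID and ordering them by time-stamp gives the observed sequence of calls between components, which is the kind of run-time trace that can then be compared with a design-time model.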

Benefits of Coupling DFSS Tools with ZDLC

Improved communication throughout the lifecycle - The HOQ-e is predominantly a communication tool, preserving the traceability of the requirements down to the design specifications, available to any stakeholder, anywhere and anytime. The stakeholders could easily observe the tie-back between requirement and business vision.

Reduced efforts to validate business requirements - Using the TiA-e models, the functions of each proposed solution can be tested against the user requirements to ensure the business drivers are met. The efforts required for validating the requirements are reduced.

Reduced injected human errors - The TiA-e models, once corrected, are used to automatically generate the technical design documents. The automation accelerated the documentation process and avoided injected human errors.

Reduced efforts to fix defects - SDP-e enriched the description of a defect with information related to the deviation between design time and run-time, helping the developer to trace the root cause of the defect faster than the classical approach.

The Story

This story is about the motivation behind the Zero Deviation Life Cycle (ZDLC): a motivation driven by real business problems, where projects are plagued by cost and schedule overruns, and where requirements no longer resembled the business needs or IT solutions failed to properly resolve the problems. Many methodologies emerged; most of them tackled the issues of management, realising a rationalised development life cycle. Very few looked at the engineering units: the parts that define the quality and reliability of the end results, the hand that makes one proud of the product. There are several topics and lines of thought written towards the concepts of Application Lifecycle Management within a new business dynamics. As a result, the motivation behind the ZDLC and its origin is to propose the concept of Application Lifecycle Engineering (ALE). This is because management constraints cannot dictate how technical engineering methods are applied and, above all else, management constraints cannot sacrifice engineering methods for speed and time.

ZDLC is about ALE, or "smart ALM". It complements all ALMs by focussing on the engineering aspects of a typical Software Development Life Cycle, advancing technology to employ statistical and probabilistic models, formal methods, simulation and intelligent automation that speed up the process of developing software whilst augmenting the quality and productivity of the process. Yet all the scientific rigor is well hidden through clever abstractions and the simplified user interface and experience of the tools.

Many organizations seek to use ZDLC in an agile execution mode, where ZDLC automates many of the tedious and time consuming software validation processes that may hinder agility. But this story is about an organization which did not want to implement agile, but wanted the agility of their existing Waterfall model (SDLC) to be increased. This organization shifted agile from being an execution model to being a quality attribute of waterfall, and wanted the best of both worlds. ZDLC thrives in such an environment.

We start by the business drivers of the organization Which are as follows:

To capture Requirements for projects / programs (Including new projects) more Effectively, que le Ensuring customer Receives the downstream benefits of higher quality deliverables and innovation.

To bring more agility to the current approach to project definition Waterfall and delivery, distinct from a Purely agile approach.

We were Asked to comeback with experience of helping our customers address challenges and How They contention may be applicable to the organization in question. We have beens working for some time with customers who Have had similar challenges and Concerns. The outcome and experience from thesis allowed us to commitments HAS economic development of a new platform, the Zero Deviation Life Cycle (ZDLC).

By using Cognizant's ZDLC platform we have achieved the following measurable benefits:

20-25% saving in the cost of Software Development Life Cycle (SDLC) delivery

40-50% reduction in the cost of quality in support and maintenance cycles

The ZDLC platform also ensures a better decision-making process, leading to a higher degree of project success. This is achieved through a structured, yet unrestricted, requirement gathering process, consistent communication across life cycle stages, and controlled impact analysis.

ZDLC is a platform comprised of the following main tools:

(HOQ-e) - House of Quality enhanced

(TRIZ-e) - Theory of Inventive Problem Solving

(RMS-e) - Requirement Modeling Solution

(TiA-e) - Testable Integration Architecture

(CPN-e) - Coloured Petri Nets

(SDP-e) - Systemic Defect Profiler

These are used by joint customer and Cognizant teams across the project life cycle. The ZDLC platform is based on some key principles and offers an approach to development which is a scientific and quantitative means of managing and measuring ever-changing requirements. This provides the ability for each requirement change to be mathematically analysed and assessed against the impact of the change across business processes. ZDLC has allowed our customers to:

instigate a measurable and sustainable culture of innovation within the program lifecycle

track requirements through the SDLC, providing a consistent communication mechanism

prioritize requirements and bring consistency across the SDLC, injecting greater agility into the process

eliminate contradictions within a solution

minimize defects throughout the SDLC and thereby reduce cost

In these engagements ZDLC increases the power of modeling software applications and creates space for innovative solutions. Whilst Cognizant has dedicated agile teams, we were empowered by the organization's focus on bringing greater agility to the current waterfall way of working rather than introducing the agile methodology.

Detailed below are two examples of how ZDLC added agility to the standard Waterfall methodology, allowing our customers to realize significant benefits.

To prove the benefit of the new approach, a comparison exercise was undertaken. Two streams of work were started at the same time to solve the same problem. The objective was to gather requirements and produce sufficient technical specifications to meet a project need. The team using a classic Waterfall approach took 15 days to complete the task, whereas the team using ZDLC took only 3 days (due to smart automation).

The second example focuses on using ZDLC to bring innovation to a customer's on-line platform. The aims were to increase the number of functional software releases over a 12 month period from 1 to 4 and to deliver a reduction in the cost of quality. ZDLC allowed the team to deliver a 42% reduction in the cost of quality, and in the first six months two functional releases have been delivered, putting the customer on track to meet their business goals. In addition, ZDLC found 3 major design flaws that the classical waterfall approach failed to identify.

The ZDLC approach adds significant value by bringing agility to waterfall and rigor to agile; the tools and principles have been carefully crafted to achieve this.

The Tools

The House of Quality (HOQ-e), adapted to the problem domain of IT for requirements engineering and business requirement traceability, to strengthen reliable communication amongst the stakeholders of the ZDLC.

Inputs: Structured questioning, aligned business and architectural analysis, customer engaged in the decision-making process.

Outputs: Prioritised and dependency-aware work packages, and consensus built across teams.

Benefits: Auditable alignment to goals, pattern-based solution definition, strategic alignment and powerful decision support.

The Theory of Inventive Problem Solving (TRIZ-e), adapted to the problem domain of IT for innovation-focused solution definition.

Inputs: HOQ analysis (re-used), prioritised list of contradictions to solve.

Outputs: Contextualised and measurable innovation options.

Benefits: Directed ideation process, so that the ideas generated meet reliability needs and can be measured before building.

Requirement Modelling Solution (RMS-e), used to model and compile user requirements, model a process flow diagram for each user requirement and generate test scenarios for each process flow diagram.

Inputs: HOQ analysis (re-used), prioritised list of contradictions to solve.

Outputs: Generated Software Requirement Documents (SRDs), process flow diagrams and test cases.

Benefits: Accelerated requirement modeling and automated generation of SRDs and requirement audits.

Testable Integration Architecture (TiA-e), used for low-level requirement consistency, verification of design against requirements and generation of validated artifacts to drive delivery and to assist in governance.

Inputs: RMS-e artifacts (re-used), 100% transparent process and design decisions, prioritised entities for communication.

Outputs: Industry-standard testable models generated against requirements, and technical contracts.

Benefits: Auditable alignment to requirements; notationally formal technical contracts to drive development and testing; earlier and more comprehensive defect detection; requirements consistency; lower cost of quality.

Coloured Petri Nets (CPN-e), adapted to the modeling of processes and non-functional requirements and the simulation of models against them.

Inputs: HOQ-e analysis (re-used), prioritised process entities.

Outputs: Machine readable deployment model for solutions.

Benefits: Deployment model can be simulated against non-functional requirements; capacity planning; stress testing support; early defect detection; lower cost of quality.
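To make the idea of simulating a model against a non-functional requirement concrete, here is a minimal sketch of a plain place/transition Petri net in Python. It is not CPN-e and it ignores token colours; the places (`queued`, `idle_worker`, `in_progress`, `done`), the two transitions and the capacity figure are all hypothetical, chosen only to illustrate the token game.

```python
from dataclasses import dataclass


@dataclass
class Transition:
    name: str
    consumes: dict  # place -> number of tokens required
    produces: dict  # place -> number of tokens added


def enabled(marking, t):
    """A transition is enabled when every input place holds enough tokens."""
    return all(marking.get(p, 0) >= n for p, n in t.consumes.items())


def fire(marking, t):
    """Return the new marking obtained by firing an enabled transition."""
    if not enabled(marking, t):
        raise ValueError(f"{t.name} is not enabled")
    new = dict(marking)
    for p, n in t.consumes.items():
        new[p] -= n
    for p, n in t.produces.items():
        new[p] = new.get(p, 0) + n
    return new


def run(marking, transitions, max_steps=100):
    """Repeatedly fire the first enabled transition; return final marking, trace and peak load."""
    trace, peak = [], marking.get("in_progress", 0)
    for _ in range(max_steps):
        ready = [t for t in transitions if enabled(marking, t)]
        if not ready:
            break
        marking = fire(marking, ready[0])
        trace.append(ready[0].name)
        peak = max(peak, marking.get("in_progress", 0))
    return marking, trace, peak


# Hypothetical deployment: 3 queued requests served by 2 workers.
start = Transition("start", {"queued": 1, "idle_worker": 1}, {"in_progress": 1})
finish = Transition("finish", {"in_progress": 1}, {"done": 1, "idle_worker": 1})
initial = {"queued": 3, "idle_worker": 2, "in_progress": 0, "done": 0}

final, trace, peak = run(initial, [start, finish])
print(final, trace, peak)
```

In this sketch the non-functional requirement being checked is simply that no more than two requests are ever in progress at once; a real coloured net would attach data to tokens and typically explore many firing orders rather than one.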

Systemic Defect Profiler (SDP-e) for automated root cause analysis.

Inputs: TiA models, log files from development work streams or network layer data.

Outputs: Formal analysis of design-time to run-time reconciliation, log sanitation.

Benefits: True, enabled governance; faster root cause analysis; much lower cost of quality / defects.

The story begins ...

Background

The principles of the Zero Deviation Life Cycle (ZDLC) complement the Agile methodology. By employing ZDLC, we are empowered with a unique set of tools that enables us to achieve the following:

Mitigate some risks within an Agile execution,

Reduce the manual effort,

and accelerate the overall process through smart automation.

We observe that the aforementioned objectives lead to a more effective Agile adoption.

Key Agile Artifacts

In order to understand the risks and challenges involved, we looked at the major artifacts and tasks in a given Agile execution; these are depicted in the following diagram. Each of the processes requires effort to avoid waste and agility to speed up the end results. There are challenges and risks that need to be diagnosed and treated.

Risks facing any Agile Execution

There are key issues that need to be answered in order to model the solution to mitigate the risks. The questions are as follows:

How do we continuously tie back User Stories to the original Business Vision?

Once we have got the vision in place, how does the Product Owner consistently do Validation and Verification of user stories (user requirements)?

Backlog Grooming - How do we continuously prioritize User Stories?

Backlog Grooming - How do we dynamically quantify the dependency amongst User Stories (user requirements)?

How are we going to handle the volume of work and Manual Overhead associated with establishment and management of test cases for user stories (user requirements)?

How do we Ensure Knowledge is managed Consistently across this highly complex and distributed Program?

Consequences of the Risks

The problem of "continuous tie-back of user requirements or User Stories to the original Business Vision" is a constant battle to ensure that what has been delivered is what was asked for by the business (Voice of the Customer). This process is tedious and time consuming and is very often incorrectly handled, which may result in developing capabilities that yield little for the business stakeholders. As a result, the validation of user stories is necessary, which leads to the next question.

Once the vision of the business is in place, how do we instigate a process of consistently validating and verifying the user stories against the vision? Failing this exercise will lead to rework, as user stories will either 1) not reflect the needs of the business (validation) or 2) be incorrectly formulated against a predefined set of best practices (verification). This exercise of validation and verification (V&V) is time consuming and may not be thorough, since V&V may be sacrificed for speed, leading to more rework and a growing backlog in the Agile product lifecycle.

Product backlog grooming is a vital activity in an Agile environment, and getting this process right defines the success of delivery. There is a continuous need to treat the backlog as new or incorrect user stories stream into the backlog queue. Backlog grooming is a repetitive task of re-prioritising and re-mapping the inter-relationships of user stories so as to design the next sprints efficiently. As the Product Backlog increases in size, the effort required to prioritize and re-prioritize increases, and so does human error. Incorrect priority leads to incorrect scheduling of sprints.
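The re-prioritising and re-mapping described above can be sketched as a dependency-aware ordering: a story is only schedulable once the stories it depends on are done, and among schedulable stories the highest priority goes first. The sketch below uses Python's standard `graphlib.TopologicalSorter` (Python 3.9+); the story names, priorities and dependencies are hypothetical, and this is an illustration of the grooming problem, not how the ZDLC tools implement it.

```python
from graphlib import TopologicalSorter


def order_backlog(priority, depends_on):
    """Order stories so dependencies come first, preferring higher priority.

    priority:   {story: numeric priority, higher is more urgent}
    depends_on: {story: iterable of stories that must be done before it}
    Every story mentioned in depends_on must also appear in priority.
    """
    sorter = TopologicalSorter()
    for story in priority:
        sorter.add(story, *depends_on.get(story, ()))
    sorter.prepare()  # raises graphlib.CycleError on circular dependencies

    ordered = []
    ready = list(sorter.get_ready())
    while ready:
        # Highest priority first; story name breaks ties deterministically.
        ready.sort(key=lambda s: (-priority[s], s))
        story = ready.pop(0)
        ordered.append(story)
        sorter.done(story)
        ready.extend(sorter.get_ready())  # stories unblocked by this one
    return ordered


# Hypothetical backlog.
priority = {"login": 5, "checkout": 9, "catalog": 7, "reviews": 3}
depends_on = {"checkout": ["login", "catalog"], "reviews": ["catalog"]}

print(order_backlog(priority, depends_on))
```

Note that "checkout" has the highest priority but is scheduled third, because it cannot start before "login" and "catalog". Re-running the function whenever a story is added or re-prioritised is the kind of repetitive step where automation removes the manual re-mapping effort.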

In the next issue of addressing risks, we discuss the problematic handling of the large volume of work and manual overhead associated with the establishment and treatment of test cases for each user story. This problem hinders the flow of activities and slows down the Agile process. The creation of test cases is tedious and time consuming and, in the classical state of affairs, these test cases are formulated manually. The latter allows for human-injected defects in the test cases, which require extra effort to 1) correct the test cases and 2) keep the test cases and user stories in sync.

The last issue addresses the challenge of ensuring knowledge is consistently managed across a distributed and complex program. Ideally, the perception of a given user story in the eyes of a Business Analyst should be the same for the tester, the developer and so on. A common understanding of the description of requirements is required. Yet the complexity of social dynamics and the geographical dispersion of the program transform this activity into a very challenging and risky one. If the knowledge is not correctly managed, communication amongst peers in the development process is ambiguous and unclear, resulting in waste and defects in the agile process. Subsequently the product backlog grows.

The consequences of these risks in an Agile execution are waste, poor quality, low yield and growing cost to the customer. Like any process, an Agile process is also subject to entropy, and work has to be done to minimize the waste so that the value of agility and speed is not lost. Now the issue is: the work that has to be done can be done either manually or with the help of some smart tools and techniques.

Are we comfortable handling these risks manually, or do we agree that these challenges warrant a tools-based mitigation approach? If the answer is yes, then follow part 2 of the blog, wherein we present the Zero Deviation Agile Enablement Product Life Cycle. The latter was designed to seamlessly blend process automation and formal mathematical rigor into the capability of Agile. It adds rigor to agility without hurting agility, augmenting it instead.

Background

"The ZDLC (Zero Deviation Life Cycle) is a platform and suite of tools developed by Cognizant with the vision of improving the efficiency, quality and cost of building IT systems".

It achieves this by enforcing the following principles:

Directly addressing how errors initially get introduced into the wider development lifecycle

Reducing the onward communication of errors into subsequent phases of the development lifecycle

Giving structure to requirements gathering, allowing problem and solution patterns to be formally identified, enabling re-use

Automating the determination of the root cause of remaining errors / defects in products

Introducing sustainable innovation as part of the product delivery culture

We also recognise that there are many contexts where such abilities may be needed, so the ZDLC has been designed to be:

Executed in any style of implementation, e.g. Iterative (RUP), Agile (SCRUM), ...

Used with any technology stack (e.g. JavaEE, .NET, Mainframe, Open Source, ...) with equal efficiency

Adopted without needing to re-train or make changes to traditional SDLC roles

Applied to projects in any form or stage - New Build, Migration, Transformation, ... or Planned, In-flight, Post Go-Live, ...

Purchased all together, or as individual tools purchased and used separately

The ZDLC Suite of Tools

A ZDLC tool is the smallest entity of the ZDLC framework. A tool is software that we built which automates and enforces the principles of ZDLC. A tool is designed to solve generic problems of development life cycles, such as requirement validation, checking of design artifacts for design defects, and functional and performance testing of the products. The following table depicts the tools created to support the practice and the fundamental principles of the ZDLC Framework.

The ZDLC Product

The products of ZDLC were designed to resolve specific problems of IT-enabled business processes. A product, in our context, is a logical grouping of the ZDLC tools (see table above) to solve a specific problem within a customer engagement. The following table presents the most popular ZDLC products that employ the tools to resolve business problems and show measurable business value by enacting the fundamental principles of ZDLC.

Zero Deviation Life Cycle: Objective Innovation (OI)

Background

Objective Innovation (OI) is a product which employs the Zero Deviation Life Cycle (ZDLC) Framework to instigate a process of constant and sustainable innovation within the problem dynamics of an organization. OI supports a collaborative service for delivering innovation in business and IT for customers, one that utilizes new techniques and methodologies against purely business problems and opportunities. OI includes a problem factory and a solution factory that together provide a highly focussed (a focused beam of ideas vs. a spectrum of ideas), measurable and repeatable innovation model.

The Problem Factory

The problem factory includes two tools (AHP-e and HOQ-e) of the ZDLC framework. The aim of the problem factory is to ensure the right problems are identified for innovative and creative problem solving. In order to get the right problem, a sophisticated process of validating the problem vis-a-vis the business goals and drivers is implemented.

AHP-e: The Analytical Hierarchy Process is used for high-level pair-wise prioritization of needs, wants and problems, which makes the subjective process of evaluating the priority or weightage of arbitrary attributes more consistent and repeatable.

HOQ-e: The House of Quality enhanced is used to drill down the problem statement from stakeholders and their functions, using statistical methods to provide a balanced and traceable view of the wants, needs and problems. HOQ-e also identifies the contradictions amongst problems, indicating those problems requiring innovative and creative solutions.
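As an illustration of pair-wise prioritization, the following sketch derives priority weights from a pairwise comparison matrix using the row geometric-mean method, one standard way of approximating AHP priorities. The three items and their comparison values are hypothetical, and nothing here is claimed to match the internals of AHP-e; in particular the sketch does not compute a consistency ratio.

```python
import math


def ahp_weights(matrix):
    """Approximate AHP priority weights with the row geometric-mean method.

    matrix[i][j] states how much more important item i is than item j,
    so the matrix must be reciprocal: matrix[j][i] == 1 / matrix[i][j].
    """
    n = len(matrix)
    for i in range(n):
        for j in range(n):
            if not math.isclose(matrix[i][j] * matrix[j][i], 1.0):
                raise ValueError("pairwise matrix must be reciprocal")
    geometric_means = [math.prod(row) ** (1.0 / n) for row in matrix]
    total = sum(geometric_means)
    return [g / total for g in geometric_means]


# Hypothetical comparison of three problems: A is twice as important as B
# and four times as important as C; B is twice as important as C.
comparisons = [
    [1.0, 2.0, 4.0],
    [0.5, 1.0, 2.0],
    [0.25, 0.5, 1.0],
]

weights = ahp_weights(comparisons)
print([round(w, 3) for w in weights])
```

Because this example matrix is perfectly consistent, the weights come out in the ratio 4 : 2 : 1. Real stakeholder judgements are rarely consistent, which is why a repeatable calculation is preferable to ad hoc ranking.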

The Solution Factory

The solution factory includes two tools (TRIZ-e and TiA-e) of the ZDLC Framework. The aim of the solution factory is to focus on innovative and creative problem solving, to re-enforce requirements and to provide early assessment of solutions in terms of risks, risk mitigation, process change and a high-level implementation plan illustrating the journey from innovative idea to solution over an existing or new technology.

TRIZ-e: The Theory of Inventive Problem Solving feeds directly from HOQ-e and provides a repeatable model to focus ideas and reinforce the process of ideation once the problem has been identified. Using TRIZ ensures an efficient ideation process.

TiA-e: Testable Integration Architecture, a set of tools that ensures that architectures meet requirements through formal testing of models against requirements prior to coding.

Using the OI product there is a potential saving of 50% from problems to requirements to technical contracts. In the context of innovation it provides early assessment of requirements and provides solutions for the re-enforcement of requirements.

Background

The challenges involved in migrating from one technology to another are complex and plagued with cost and schedule overruns. The problem is very often related to the fact that an organization has several technologies, e.g. BPM technologies, and wants to rationalize by consolidating onto a single product suite, but some platforms are undocumented and unclear:

10+ year old platform without any proper documentation

Customer facing significant unwanted financial outlay if not off the platform by the due dates

Operations team no longer knew ALL of the underpinning functionality, with significant ad hoc components added

Fear of loss of service if the process was 'rushed', but limited budget and Subject Matter Expert (SME) 'face time' available

The Smart Migration product employs the principles of the Zero Deviation Life Cycle (ZDLC) and proposes a fast and reliable approach to mitigating the risks involved in technology migration programs. The key elements of the approach are as follows:

Semi-automated reverse engineering of legacy system from Log Files with minimal demand on SMEs' time

Legacy system transcribed into industry-standard testable design models, with simulations performed to test the models prior to implementation

Fully automated generation of notationally correct model specifications (e.g. BPMN 2.0 and UML) to improve the quality, correctness and efficiency of modeling to the target platform

The outcome of implementing the Smart Migration solution is to systematically infer the complete behavior of the source system, which is not otherwise achievable through conventional means, with the objective of re-instating the complete documentation set and simulation capability for the system. As a result, Smart Migration achieves a SAFE lift, shift and transform of technology within the time frames set, whilst improving the correctness of the software delivered and ensuring alignment to business goals.

Profile of the Class of Problem

There are key problems that one faces when embarking on Technology Migration initiatives, and these are stated as follows:

Both business and engineers have lost touch with the design of systems and need to regain control.

Platform is complex or mission-critical, and a safe migration whilst preserving Business-as-Usual operations is hard to achieve

Significant levels or frequency of planned changes

Multiple daily escalations, high defect rate or 'burning platform(s)'

Fragile relationships with Customers / users

Insufficient or Ineffective governance standardization processes

Non-existent, low quality or inaccurate documentation

Excessive licensing or operating Costs

Lack of standardization making remediation impractical

The Value proposition

In response to these problem attributes, the Smart Migration product employs techniques to deliver 'informed' predictability of the state of the existing system's behavior. As a result, early detection of potential defects makes the cost and risk of change affordable, with faster, safer and higher quality execution. An innate property of the smart migration process is the reinstatement of accurate, up-to-date documentation, which reduces the cost of onward support. It brings the platform 'under control' and re-enables delivery of value to the customers / users / business. The long-term implication of deploying the Smart Migration product is to facilitate Knowledge Transfer (KT) without significant demands on critical SMEs' time and, more importantly, to stabilize program or project level velocity.

In summary, Smart Migration demonstrates systematic automation of a highly manual and error-prone process to provide a clear, accurate and reliable determination of existing system behavior in an efficient, low-risk and low-impact way. Through the use of simulation techniques, it tests the proper alignment of existing system behavior to the target architecture or updated models, and subsequently translates the models into implementation specifications, e.g. BPMN 2.0 and UML.

The following diagram depicts the process model of the Smart Migration product, a process adopted to map and compare the Voice of the Machine against the Voice of the Business so that a reliable and risk-free journey of migration can be traced systematically.

Zero Deviation Life Cycle: Smart Architecture Modelling

Background

With the expanding role of IT as a key enabler of the success and growth of organizations, the typical systems estate has become increasingly complex, with growing use of specialised applications to support various business functions. Further, approaches like Service Oriented Architecture have led to an explosion in the number as well as the complexity of interfaces between various software components.

However, IT organizations have realised that traditional Application Lifecycle Management (ALM) tools rely primarily on manually intensive approaches to modeling and documenting application behavior and interfaces. This has resulted in most architecture models being out of date with the current run-time environment.

The lack of accurate, up-to-date documentation of applications, interfaces and their dependencies has led to several major issues:

Increased stress and cost of planning for and managing any change initiatives

Increased risk of budget and timeline overruns due to reduced quality of available information.

Cognizant's ZDLC SMART Architecture and Modelling

ZDLC stands for the "Zero Deviation Life Cycle" and is a next generation SMART ALM platform that reduces the cost and increases the quality of IT deliverables through mathematically rigorous automation, returning savings of between 20% and 25%.

Cognizant's SMART Architecture Modelling approach leverages the ZDLC platform and tools to:

Automate the discovery of interfaces and dependencies between applications and other software components from the run-time environment.

Provide a comprehensive platform to model and document the static as well as dynamic behaviors of these interfaces.

Increase the quality of architecture models by deriving them directly from the run time rather than through a manual discovery activity.

Solution Highlights

The key highlights of the SMART Application Modelling approach are:

Other sources of run-time behavior, like network sniffers and monitors, can also be used to enrich the collaboration data.

Collaboration diagrams are then converted to architecture models that represent all the integration issues across the estate.

Communication is tracked not just at a point-to-point level but across the entire choreography of a business transaction, highlighting the entire "chain of dependency" for any given functionality.
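The difference between point-to-point tracking and a full "chain of dependency" can be sketched with a small graph traversal: observed calls give direct edges, and a breadth-first search follows them transitively. The component names and the `(source, destination)` input shape below are hypothetical, assumed only for illustration; the sketch says nothing about how the ZDLC tools actually capture or store run-time data.

```python
from collections import defaultdict, deque


def build_graph(observed_calls):
    """Turn observed (source, destination) calls into an adjacency map."""
    graph = defaultdict(set)
    for source, destination in observed_calls:
        graph[source].add(destination)
    return graph


def dependency_chain(graph, start):
    """Return every component reachable from start, directly or transitively."""
    seen = set()
    queue = deque([start])
    while queue:
        node = queue.popleft()
        for nxt in graph.get(node, ()):
            if nxt not in seen and nxt != start:
                seen.add(nxt)
                queue.append(nxt)
    return seen


# Hypothetical calls discovered from run-time data.
observed_calls = [
    ("web", "orders"),
    ("orders", "billing"),
    ("orders", "inventory"),
    ("billing", "ledger"),
    ("reports", "ledger"),
]

graph = build_graph(observed_calls)
print(sorted(graph["web"]))                    # direct, point-to-point view
print(sorted(dependency_chain(graph, "web")))  # full chain of dependency
```

The direct view of "web" shows only "orders", while the chain also surfaces "billing", "inventory" and "ledger", which is the information needed for change impact analysis.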

Key Benefits

The SMART Architecture Modelling approach brings the following key benefits:

Systems interfaces can be discovered and documented in an efficient, low-impact way, leading to a reduction in the overall effort as well as timelines

Minimizes the dependency on SMEs' knowledge and time, allowing higher use of distributed teams

Systematic automation of a traditionally manual and error-prone discovery process leads to higher quality of information gathered

Reduced risk of missed interfaces and dependencies leads to more predictable outcomes

Not only re-instates accurate, up-to-date documentation but also reduces the cost of maintaining it in sync with future changes

Provides accurate and interactive architecture models that: 1) facilitate rapid simulation and change impact analysis and 2) can serve as a reliable basis for future IT strategy, e.g. application rationalization.

The waterfall model is viable for software products that do not change very much once they are specified. But for software products that have their feature sets redefined during development because of user feedback and other factors, the traditional waterfall model is no longer appropriate.

- The Evolutionary development model (EVO) divides the development cycle into smaller, incremental waterfall models in which users are able to get access to the product at the end of each cycle.

- Feedback is provided by the users on the product for the planning stage of the next cycle, and the development team responds, often by changing the product, plans, or processes.

- These incremental cycles are typically two to four weeks in duration and continue until the product is shipped.

Benefits of Evolutionary Development Model

- Benefits not only business results but marketing and internal operations as well.

- Use of EVO brings significant reduction in risk for software projects.

- EVO can reduce costs by providing a structured, disciplined avenue for experimentation.

- EVO allows the marketing department access to early deliveries, facilitating development of documentation and demonstrations.

- Short, frequent EVO cycles have some distinct advantages for internal processes and people considerations.

- The cooperation and flexibility required by EVO of each developer results in greater teamwork.

- Better fit the product to user needs and market requirements.

- Manage project risk with definition of early cycle content.

- Uncover key issues early and focus attention appropriately.

- Increase the opportunity to hit market windows.

- Accelerate sales cycles with early customer exposure.

- Increase management visibility of project progress.

- Increase productivity and product team motivation.

The Journey

At the Define stage of the DFSS methodology, we instigated the requirement drill-down process of HOQ-e. The key activities were:

At level 1 of HOQ-e: Stakeholder Analysis - We identified the principal roles and actors related to the transformation program, e.g. the business users, business sponsors, and other concerned parties. The HOQ-e ensured an exhaustive list of roles and responsibilities.

At level 2 of HOQ-e: We used the HOQ-e to analyse the core business functions of the stakeholders and assessed them against the importance of each stakeholder, identified in level 1.

At level 3 of HOQ-e: We used the HOQ-e to assess the inefficiency drivers of each core business function, identified in level 2.

At level 4 of HOQ-e: We used the HOQ-e to identify high-level solution characteristics to resolve the inefficiency drivers, identified in level 3.

At level 5 of HOQ-e: We used the HOQ-e to start the process of deriving the user requirements from the solution characteristics, identified in level 4.

At level 6 of HOQ-e: We used the HOQ-e to kick off the process of refining the user requirements, identified in level 5, into technical requirements.
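The level-by-level drill-down above follows the general House of Quality pattern, in which the importance of the items at one level is carried into the next level through a relationship matrix. The sketch below shows that cascade using the conventional QFD 9/3/1 strength scale. The stakeholders, functions, drivers and all numbers are hypothetical, and the weighting scheme is an assumption for illustration rather than a description of HOQ-e's statistical methods.

```python
def cascade(weights, relationship):
    """Carry importance from one House of Quality level to the next.

    weights:      {row_item: importance at the current level}
    relationship: {row_item: {column_item: strength, e.g. 9, 3 or 1}}
    Returns normalised importance for each column item of the next level.
    """
    scores = {}
    for row, weight in weights.items():
        for column, strength in relationship.get(row, {}).items():
            scores[column] = scores.get(column, 0.0) + weight * strength
    total = sum(scores.values())
    if total == 0:
        raise ValueError("no relationships link the two levels")
    return {column: score / total for column, score in scores.items()}


# Level 1 -> level 2: hypothetical stakeholder importance and their functions.
stakeholders = {"sponsor": 0.5, "users": 0.3, "operations": 0.2}
stakeholder_to_function = {
    "sponsor": {"billing": 9, "reporting": 3},
    "users": {"ordering": 9, "billing": 3},
    "operations": {"reporting": 9, "ordering": 1},
}
functions = cascade(stakeholders, stakeholder_to_function)

# Level 2 -> level 3: hypothetical inefficiency drivers per function.
function_to_driver = {
    "billing": {"manual_rekeying": 9},
    "reporting": {"late_data": 9, "manual_rekeying": 1},
    "ordering": {"manual_rekeying": 3, "late_data": 3},
}
drivers = cascade(functions, function_to_driver)

print(max(functions, key=functions.get), max(drivers, key=drivers.get))
```

Because every level is computed from the one before it, each technical item can be traced back to the stakeholder importance that justified it, which is the tie-back property the drill-down is meant to preserve.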

At the Measure stage of the DFSS methodology, we derived the metrics (referred to as Critical To Quality - CTQs) required to measure conformance to the quality of the proposed SLAs or solution. The high-level quality attributes are articulated by the business stakeholders, and we used GQ(I)M to derive the measurable parts of the quality attributes, which are called Critical To Quality (CTQs).

At level 7 of HOQ-e: We employed the HOQ-e to assess the relationships between the technical requirements, identified in level 6 of HOQ-e, and the CTQs derived by GQ(I)M. At this level we assess how well (defined by the CTQs) a function (defined by the technical requirement) should perform. We are thus able to map the model, with clear quality metrics, against the technical requirements.

At the Analyse - Design stage of the DFSS methodology, we enter design, wherein the technical requirements (level 7 of HOQ-e) are translated into Testable Integration Architecture (TiA-e) models. These are the communication models, or in UML terms the component models, of the proposed solutions. In the context of this program, the models were illustrated in BPMN 2.0 notation. The ZDLC automatically preserves the traceability of the artifacts from level 1 (of HOQ-e) down to the TiA models. The key activities were:

Using TiA-e: We built the simulation model based on the specification prescribed by level 7 of HOQ-e; TiA-e produced an accurate representation of the structural model of the proposed solution.

Using TiA-e: We used the formal pi-calculus compiler to verify the syntactical characteristics of the TiA-e model.

Using TiA-e: We calibrated and validated the TiA model against the requirements, identified in level 7.

Using TiA-e: We simulated the model against the CTQs identified in level 7 of HOQ-e and corrected the model as required, whilst asking the business a vital question: "Is that what you meant?"

Using TiA-e: Based on the corrected model, BPMN, UML state-charts and designs are generated and provided as guidance to the development team for coding. The guidelines are unambiguous, hence reinforcing the communication to the developers.

At the Validate stage of the DFSS methodology, we employed the Systemic Defect Profiler to reduce the time taken to find the root cause of a defect. The key activities were:

Using SDP-e: The debug parser of SDP-e was configured to interpret the log structure of the implementation technology in place, which in our context was Pega PRPC. Note that SDP-e requires at least five attributes from the application logs, which are 1) time-stamp, 2) session ID, 3) source component, 4) destination component and 5) the function call.

Using SDP-e: We set up the server for SDP-e to be executed. SDP-e is injected with run-time application logs or debug logs; the data is reverse engineered and automatically examined against the TiA model. SDP-e generated a defect report with an enriched description of the root cause of the defect, thus aiding the developer to speed up the defect fix activity.
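To show why those five log attributes are enough to compare run-time behavior with a design model, here is a minimal sketch. The pipe-delimited log format, the component names and the set of allowed interactions are all hypothetical; they stand in for a real parser configuration and a real TiA model, and the code is not the SDP-e implementation.

```python
from dataclasses import dataclass
from datetime import datetime


@dataclass(frozen=True)
class LogRecord:
    timestamp: datetime
    session_id: str
    source: str
    destination: str
    function: str


def parse_line(line):
    """Parse 'timestamp|session|source|destination|function' (assumed format)."""
    ts, session, source, destination, function = (
        part.strip() for part in line.split("|")
    )
    return LogRecord(datetime.fromisoformat(ts), session, source, destination, function)


def find_deviations(records, allowed_interactions):
    """Return run-time calls that the design-time model does not permit."""
    return [
        r for r in records
        if (r.source, r.destination, r.function) not in allowed_interactions
    ]


# Hypothetical design-time model: which component may call which function.
allowed_interactions = {
    ("Portal", "Orders", "createOrder"),
    ("Orders", "Billing", "charge"),
}

log_lines = [
    "2014-03-01T10:00:00|s1|Portal|Orders|createOrder",
    "2014-03-01T10:00:01|s1|Orders|Billing|charge",
    "2014-03-01T10:00:02|s1|Portal|Billing|charge",
]

records = [parse_line(line) for line in log_lines]
deviations = find_deviations(records, allowed_interactions)
for d in deviations:
    print(f"{d.timestamp.isoformat()} session {d.session_id}: "
          f"{d.source} -> {d.destination}.{d.function} is not in the model")
```

The third record is flagged because the hypothetical model never lets "Portal" call "Billing" directly; the session ID and time-stamp then let a developer reconstruct what else happened in that session around the deviation.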

Benefits of Coupling DFSS with ZDLC tools

Improved communication throughout the lifecycle - The HOQ-e is predominantly a communication tool, preserving the traceability of the requirements down to the design specifications, available to any stakeholder, anywhere and anytime. The stakeholders could easily observe the tie-back between requirement and business vision.

Reduced effort to validate business requirements - Using the TiA-e models, the functionality of each proposed solution can be tested against the user requirements to ensure the business drivers are met. The effort required for validating the requirements is reduced.

Reduced injected human errors - The TiA-e models, once corrected, are used to automatically generate the technical design documents. The automation and acceleration of the documentation process avoided injected human errors.

Reduced effort to fix defects - SDP-e enriched the description of a defect with information related to the deviation between design time and run time, helping the developer to trace the root cause of the defect faster than with the classical approach.

The Story

This story is about the motivation behind the Zero Deviation Life Cycle (ZDLC): a motivation driven by real business problems, where projects are plagued by cost and schedule overruns, requirements no longer resemble the business needs, or IT solutions fail to properly resolve the problems. Many methodologies have emerged, and most of them tackle the management issues of realising a rationalised development life cycle. Very few look at the engineering units: the parts that define the quality and reliability of the end results, the hand that makes one proud of the product. Several topics and lines of thought have been written on the concepts of Application Lifecycle Management within new business dynamics. As a result, the motivation behind the ZDLC and its origin is to propose the concept of Application Lifecycle Engineering (ALE). This is because management constraints cannot dictate how technical engineering is applied and, above all else, management constraints cannot sacrifice engineering methods for speed and time.

ZDLC is about ALE, or "smart ALM". It complements all ALMs by focusing on the engineering aspects of a typical Software Development Life Cycle, advancing technology to employ statistical and probabilistic models, formal methods, simulation and intelligent automation that speed up the process of developing software whilst augmenting the quality and productivity of the process. Yet all the scientific rigor is well hidden behind clever abstractions and the simplified user interface and experience of the tools.

Many organizations seek to use ZDLC in an agile execution mode, where ZDLC automates many of the tedious and time-consuming software validation processes that may hinder agility. But this story is about an organization which did not want to implement agile, but wanted the agility of their existing Waterfall model (SDLC) to be increased. This organization shifted from Agile as an execution model to agility as a quality attribute of waterfall, and wanted the best of both worlds. ZDLC thrives in such an environment.

We start with the business drivers of the organization, which are as follows:

To capture requirements for projects / programs (including new projects) more effectively, ensuring that the customer receives the downstream benefits of higher quality deliverables and innovation.

To bring more agility to the current Waterfall approach to project definition and delivery, distinct from a purely agile approach.

We were asked to come back with our experience of helping customers address these challenges and how it may be applicable to the organization in question. We have been working for some time with customers who have had similar challenges and concerns. The outcome and experience from these engagements has allowed us to develop a new platform, the Zero Deviation Life Cycle (ZDLC).

By using Cognizant's ZDLC platform we have achieved the following measurable benefits:

20-25% saving in the cost of Software Development Life Cycle (SDLC) delivery

40-50% reduction in the cost of quality in support and maintenance cycles

The ZDLC platform also ensures a better decision-making process, leading to a higher degree of project success. This is achieved through a structured, yet unrestricted, requirement gathering process, establishing consistent communication across life cycle stages and controlled impact analysis.

ZDLC is a platform comprised of the following main tools:

(HOQ-e) - House of Quality enhanced

(TRIZ-e) - Theory of Inventive Problem Solving

(RMS-e) - Requirement Modeling Solution

(TiA-e) - Testable Integration Architecture

(CPN-e) - Coloured Petri Nets

(SDP-e) - Systemic Defect Profiler

These are used by joint customer and Cognizant teams across the project life cycle. The ZDLC platform is based on some key principles to offer an approach to development which is a scientific and quantitative means of managing and measuring ever-changing requirements. This provides the ability for each change requirement to be mathematically analysed and assessed against the impact of the change across business processes. ZDLC has allowed our customers to:

instigate a measurable and sustainable culture of innovation within the program lifecycle

track requirements through the SDLC, providing a consistent communication mechanism

prioritize requirements and bring consistency across the SDLC, injecting greater agility into the process

eliminate contradictions within a solution

minimize defects throughout the SDLC and thereby reduce cost

In these engagements ZDLC increases the power of modeling software applications and creating space for innovative solutions. Whilst Cognizant has dedicated agile teams, we were empowered by the organization's focus on bringing greater agility to the current waterfall way of working rather than introducing the agile methodology.

Detailed below are two examples of how ZDLC added agility to the standard Waterfall methodology, allowing our customers to realize significant benefits.

To prove the benefit of the new approach, a comparison exercise was undertaken. Two streams of work were started at the same time to solve the same problem. The objective was to gather and produce sufficient requirements and technical specifications to meet a project need. The team using a classic Waterfall approach took 15 days to complete the task, whereas the team using ZDLC took only 3 days (due to smart automation).

The second example focuses on using ZDLC to bring innovation to a customer's on-line platform. The aims were to increase the number of functional software releases over a 12 month period from 1 to 4 and to deliver a reduction in the cost of quality. ZDLC allowed the team to deliver a 42% reduction in the cost of quality, and in the first six months two functional releases have been delivered, putting the customer on track to meet their business goals. In addition, ZDLC found 3 major design flaws that the classical waterfall approach failed to identify.

The ZDLC approach adds significant value by bringing rigor to agile and agility to waterfall, and the tools and principles have been carefully crafted to achieve this.

The Tools

The House of Quality (HOQ-e), adapted to the problem domain of IT for requirements engineering and business requirement traceability, to strengthen reliable communication amongst the stakeholders of the ZDLC.

Inputs: Structured questioning, aligned business and architectural analysis, engaged customer decision-making process.

Outputs: Prioritised and dependency-aware work packages and consensus building across teams.

Benefits: Auditable alignment to goals, pattern-based solution definition, strategic alignment and powerful decision support.
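How a House of Quality turns customer needs into a prioritised list can be sketched with the conventional QFD calculation: each technical characteristic scores the sum of customer importance multiplied by relationship strength. The text does not describe how HOQ-e itself computes priorities, so the function name technical_priorities, the 1/3/9 strength scale and the example needs below are assumptions for illustration only.

```python
def technical_priorities(importance, relationships):
    """Rank technical characteristics by weighted relationship score.

    importance:    {customer_need: weight}
    relationships: {customer_need: {characteristic: strength}}
    Returns a list of (characteristic, score), highest score first.
    """
    scores = {}
    for need, weight in importance.items():
        for characteristic, strength in relationships.get(need, {}).items():
            scores[characteristic] = scores.get(characteristic, 0) + weight * strength
    return sorted(scores.items(), key=lambda item: item[1], reverse=True)


# Hypothetical customer needs with importance weights (1 = low, 5 = high).
importance = {"fast checkout": 5, "accurate pricing": 3}

# Hypothetical relationship matrix using the common 1 / 3 / 9 scale.
relationships = {
    "fast checkout": {"page load time": 9, "payment API latency": 3},
    "accurate pricing": {"payment API latency": 1, "pricing rule coverage": 9},
}

for characteristic, score in technical_priorities(importance, relationships):
    print(f"{characteristic}: {score}")
```

With these example numbers, page load time scores 45, pricing rule coverage 27 and payment API latency 18, which gives the order in which the corresponding work packages would be considered.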

The Theory of Inventive Problem Solving (TRIZ-e), adapted to the problem domain of IT for innovative, solution-focused definition.

Inputs: HOQ analysis (re-used), prioritised list of contradictions to solve.

Outputs: Contextualised and measurable innovation options.

Benefits: Directed ideation process, ensuring that the ideas generated meet reliability needs and can be measured before building.

Requirement Modelling Solution (RMS-e), used to model and compile user requirements, model a process flow diagram for each user requirement and generate test scenarios for each process flow diagram.

Inputs: HOQ analysis (re-used), prioritised list of contradictions to solve.

Outputs: Generated Software Requirement Documents (SRDs), process flow diagrams and test cases.

Benefits: Accelerated process of requirement modeling and automated generation of SRDs and requirement audits.

Testable Integration Architecture (TiA-e), used for low-level requirement consistency, verification of design against requirements and generation of validated artifacts to drive delivery and to assist in governance.

Inputs: RMS-e artifacts (re-used), 100% transparent process and design decisions, prioritised entities for communication.

Outputs: Industry-standard testable models generated against requirements, and technical contracts.

Benefits: Auditable alignment to requirements, formal notation, technical contracts to drive development and testing, earlier and more comprehensive defect detection, requirements consistency, lower cost of quality.

Coloured Petri Nets (CPN-e), adapted to process modeling of non-functional requirements and simulation of models against them.

Inputs: HOQ-e analysis (re-used), prioritised process entities.

Outputs: Machine readable deployment model for solutions.

Benefits: Deployment model can be simulated against non-functional requirements, capacity planning, stress testing support, early defect detection, lower cost of quality.
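The idea of simulating a deployment model for capacity planning can be shown with a very small Petri net. This is a plain place/transition net, not a coloured one, and it is not CPN-e: the function names, the transition layout and the places "queued", "free_workers" and "in_progress" are assumptions chosen for illustration.

```python
def enabled(marking, transition):
    """A transition is enabled when every input place holds enough tokens."""
    return all(marking.get(place, 0) >= n for place, n in transition["consume"].items())


def fire(marking, transition):
    """Return the new marking after firing; the input marking is not modified."""
    if not enabled(marking, transition):
        raise ValueError("transition is not enabled")
    new_marking = dict(marking)
    for place, n in transition["consume"].items():
        new_marking[place] = new_marking.get(place, 0) - n
    for place, n in transition["produce"].items():
        new_marking[place] = new_marking.get(place, 0) + n
    return new_marking


def run_until_blocked(marking, transition, max_steps=1000):
    """Fire the transition repeatedly until it is no longer enabled."""
    steps = 0
    while steps < max_steps and enabled(marking, transition):
        marking = fire(marking, transition)
        steps += 1
    return marking, steps


# Hypothetical deployment: 3 queued requests, 2 free workers.
start_request = {
    "consume": {"queued": 1, "free_workers": 1},
    "produce": {"in_progress": 1},
}
initial = {"queued": 3, "free_workers": 2, "in_progress": 0}

final, fired = run_until_blocked(initial, start_request)
print(fired, final)
```

In this example the transition fires twice and then blocks with one request still queued and no free workers, which is the kind of capacity shortfall a simulation against non-functional requirements is meant to expose before deployment.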

Systemic Defect Profiler (SDP-e) for automated root cause analysis.

Inputs: TiA models, log files from development work streams or network layer data.

Outputs: Formal analysis from design-time to run-time reconciliation, logging sanitation.

Benefits: True, enabled governance, faster root cause analysis. Much lower cost of quality / defects.

The story begins ... Background

The principles of the Zero Deviation Life Cycle (ZDLC) complement the Agile methodology. By employing ZDLC, we are empowered with a unique set of tools that enables us to achieve the following:

Mitigate risks within an Agile execution,

Reduce the manual effort,

and accelerate the overall process through smart automation.

We observe that the aforementioned objectives lead to the enhancement of an effective Agile adoption.

Key Agile Artifacts

In order to understand the risks and challenges involved, we looked at the major artifacts and tasks in a given Agile execution, and these are depicted in the following diagram. Each of the processes requires effort to avoid waste and agility to speed up the end results. There are challenges and risks that need to be diagnosed and treated.

Risks Facing Agile Execution

There are key questions that need to be answered in order to model the solution to mitigate the risks. The questions are as follows:

How do we continuously tie back User Stories to the original Business Vision?

Once we have got the vision in place, how does the Product Owner consistently perform Validation and Verification of user stories (user requirements)?

Backlog Grooming - How do we continuously prioritize User Stories?

Backlog Grooming - How do we dynamically quantify the dependency amongst User Stories (user requirements)?

How are we going to handle the volume of work and manual overhead associated with the establishment and management of test cases for user stories (user requirements)?

How do we ensure knowledge is managed consistently across this highly complex and distributed program?

Consequences of the Risks

The problem of "continuous tie-back of user requirements or User Stories to the original Business Vision" is a constant battle to ensure that what has been delivered is what was asked for by the business (Voice of the Customer). This process is tedious and time consuming and very often, if incorrectly handled, may result in developing capabilities that are of no use to the business stakeholders. As a result, the validation of user stories is necessary, which leads to the next question.
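A continuous tie-back check can be pictured as a simple traceability test that runs whenever the backlog changes. The sketch below is only an illustration of that idea, not a description of how the ZDLC tools do it; the function name untraced_stories, the story IDs and the goal IDs are hypothetical.

```python
def untraced_stories(story_to_goal, vision_goals):
    """Return the IDs of user stories that do not tie back to a known vision goal.

    story_to_goal: {story_id: goal_id or None}
    vision_goals:  set of goal IDs taken from the business vision
    """
    return sorted(
        story_id
        for story_id, goal_id in story_to_goal.items()
        if goal_id not in vision_goals
    )


# Hypothetical business vision and backlog.
vision_goals = {"G1-faster-onboarding", "G2-lower-support-cost"}
backlog = {
    "US-101": "G1-faster-onboarding",
    "US-102": None,                  # never linked to a goal
    "US-103": "G9-retired-goal",     # linked to a goal no longer in the vision
}

print(untraced_stories(backlog, vision_goals))
```

Stories returned by the check are candidates for validation with the Product Owner before any development effort is spent on them.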